Hey Everyone,

Among LLMs trained for a specific field, the BloombergGPT paper is the one I was especially excited about. So what’s going on here?

Will A.I. play a role in the future of investing, finance and economics itself? Bloomberg is bringing to finance what GPT and ChatGPT brought to everyday general-purpose chatbots. Or at least it’s trying.

In the new paper BloombergGPT: A Large Language Model for Finance, a research team from Bloomberg and Johns Hopkins University presents BloombergGPT, a 50-billion-parameter language model trained on a dataset of more than 700 billion tokens that outperforms existing open models of a similar size on financial tasks.

The hope here builds on earlier findings:

Models trained solely on domain-specific data have outperformed general-purpose LLMs on tasks within particular disciplines, such as science and medicine, despite being substantially smaller.

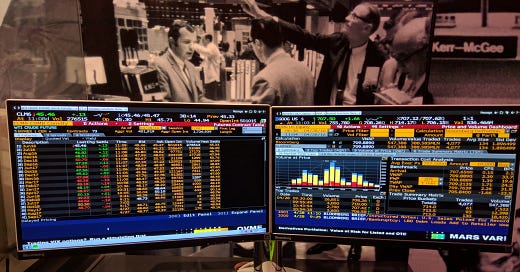

Bloomberg’s Terminal has been the trading and financial world’s go-to source of financial market data for over four decades. It’s also very, very expensive, so what if it just got a huge upgrade from A.I.?

Models with 280 billion, 540 billion, and 1 trillion parameters were quickly created after GPT-3. The leap from GPT-2 to GPT-5 is going to be significant in the history of A.I. As we near mid-2023, LLMs are coming out each week, some weird and some hopefully useful, but mostly just stepping stones to new ones.

On Friday, March 31st, Bloomberg announced that it had launched a large-scale artificial intelligence model that has been trained on financial data to improve preexisting natural language processing tasks, such as sentiment analysis and news classification.

If data was the new oil, what are LLMs? The new electricity?

Bloomberg researchers pioneered a mixed approach that combines finance data with general-purpose datasets to train a model that achieves best-in-class results on financial benchmarks, while also maintaining competitive performance on general-purpose LLM benchmarks.

To achieve this milestone, Bloomberg’s ML Product and Research group collaborated with the firm’s AI Engineering team to construct one of the largest domain-specific datasets yet, drawing on the company’s existing data creation, collection, and curation resources.

Bloomberg is a financial data company, and its data analysts have collected and maintained financial language documents over the span of forty years. The team pulled from this extensive archive of financial data to create a comprehensive 363 billion token dataset consisting of English financial documents.

This data was augmented with a 345 billion token public dataset to create a large training corpus with over 700 billion tokens. Using a portion of this training corpus, the team trained a 50-billion parameter decoder-only causal language model.
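To make the mix concrete, here is a quick back-of-the-envelope sketch in Python using only the two token counts quoted above. The function name `corpus_mix` and the dictionary keys are my own illustration, not anything from the paper, and since the model was trained on only a portion of the corpus, these shares describe the dataset rather than what the model actually saw.

```python
# Token counts as quoted in this post, in billions of tokens.
FINANCIAL_TOKENS_B = 363  # Bloomberg's financial documents
PUBLIC_TOKENS_B = 345     # general-purpose public data


def corpus_mix(financial_b: float, public_b: float) -> dict:
    """Return the combined corpus size and each source's share of it.

    Hypothetical helper for illustration only.
    """
    total = financial_b + public_b
    return {
        "total_b": total,
        "financial_share": financial_b / total,
        "public_share": public_b / total,
    }


mix = corpus_mix(FINANCIAL_TOKENS_B, PUBLIC_TOKENS_B)
print(f"Total corpus: {mix['total_b']}B tokens")          # Total corpus: 708B tokens
print(f"Financial share: {mix['financial_share']:.1%}")   # Financial share: 51.3%
print(f"Public share: {mix['public_share']:.1%}")         # Public share: 48.7%
```

So the corpus is split roughly down the middle between finance and general text, which fits the "mixed approach" described above.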

The resulting model was validated on existing finance-specific NLP benchmarks, a suite of Bloomberg internal benchmarks, and broad categories of general-purpose NLP tasks from popular benchmarks (e.g., BIG-bench Hard, Knowledge Assessments, Reading Comprehension, and Linguistic Tasks).

Notably, the BloombergGPT model outperforms existing open models of a similar size on financial tasks by large margins, while still performing on par or better on general NLP benchmarks.


Let’s get some more detail on this:
