Hey Everyone,

This week I’d like to talk about the new foundational video model by Twelve Labs.

On October 23, 2023, Twelve Labs announced its latest video-language foundation model, Pegasus-1, along with a new suite of Video-to-Text APIs (Gist API, Summary API, Generate API). The startup was founded in 2021 and has already raised $27 million in pre-Series A funding.

What the model can do is mind-blowing!

What is Pegasus-1?

The New Model: Pegasus-1 has approximately 80B parameters across three jointly trained components: a video encoder, a video-language alignment model, and a language decoder.
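To make the three-component layout a bit more concrete, here is a toy sketch of how data might flow from a video encoder, through an alignment step, into a language decoder. This is not Twelve Labs' implementation: every function name, the 2-d and 3-d embedding sizes, and the fixed projection matrix are hypothetical stand-ins chosen only to keep the example runnable. Real components would be large neural networks trained jointly.

```python
from typing import List

Vector = List[float]


def video_encoder(frames: List[List[float]]) -> List[Vector]:
    """Hypothetical encoder: one 2-d embedding per frame (mean brightness, contrast)."""
    return [[sum(f) / len(f), max(f) - min(f)] for f in frames]


def alignment_model(video_embeddings: List[Vector]) -> List[Vector]:
    """Hypothetical alignment step: project 2-d video embeddings into a 3-d decoder space."""
    projection = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # fixed toy weights, not learned
    return [
        [sum(w * x for w, x in zip(row, emb)) for row in projection]
        for emb in video_embeddings
    ]


def language_decoder(aligned: List[Vector]) -> str:
    """Hypothetical decoder: turn aligned embeddings into a one-line description."""
    mean_brightness = sum(v[0] for v in aligned) / len(aligned)
    tone = "bright" if mean_brightness >= 0.5 else "dark"
    return f"A {tone} clip with {len(aligned)} frame(s)."


def video_to_text(frames: List[List[float]]) -> str:
    """Chain the three stages: encode, align, decode."""
    return language_decoder(alignment_model(video_encoder(frames)))


if __name__ == "__main__":
    # Two tiny "frames", each a list of pixel intensities in [0, 1].
    print(video_to_text([[0.9, 1.0], [0.8, 0.6]]))
```

The point of the sketch is only the shape of the pipeline: the alignment stage sits between the two other components so that the decoder receives inputs in a space it can consume.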

Dataset: Twelve Labs has collected over 300 million diverse, carefully curated video-text pairs, making it one of the largest video-text corpora for video-language foundation model training. Their technical report is based on the initial training run, conducted on a 10% subset consisting of 35M video-text pairs and over 1B image-text pairs.

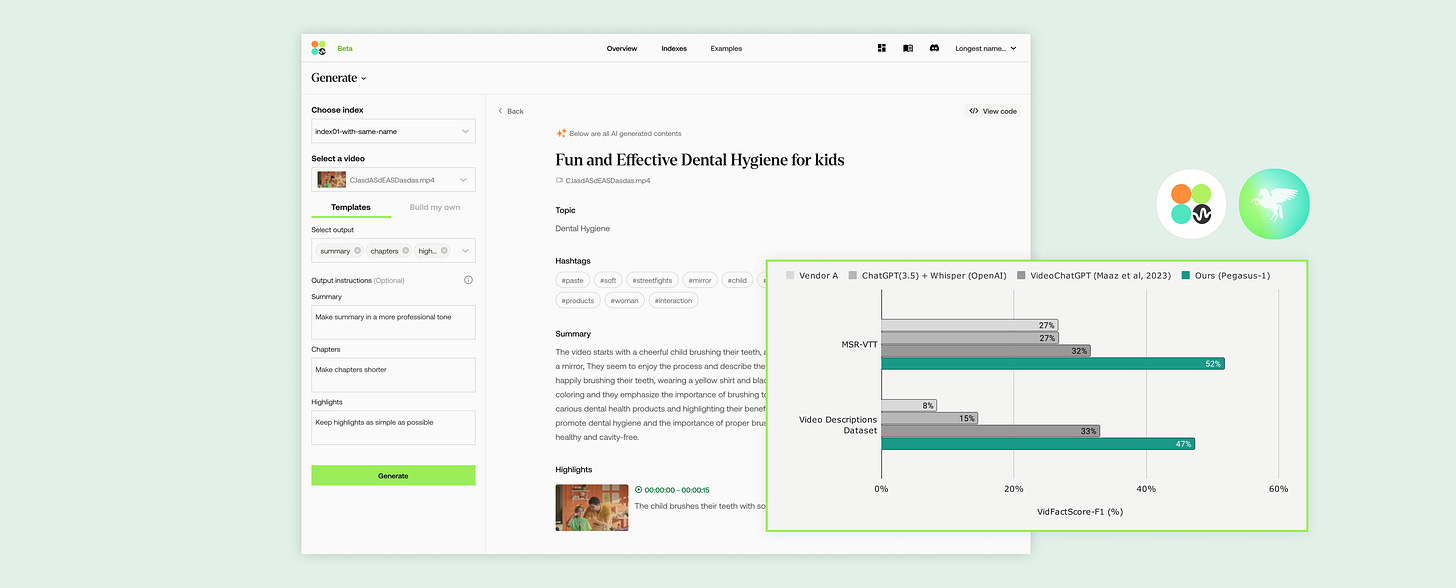

Performance against SOTA video-language model: Compared to the previous state-of-the-art (SOTA) video-language model, Pegasus-1 shows a 61% relative improvement on the MSR-VTT Dataset (Xu et al., 2016) and a 47% relative improvement on the Video Descriptions Dataset (Maaz et al., 2023), as measured by the QEFVC Quality Score (Maaz et al., 2023). On VidFactScore (Twelve Labs' proposed evaluation metric), it shows a 20% absolute F1-score increase on the MSR-VTT Dataset and a 14% increase on the Video Descriptions Dataset.

Performance against ASR+LLM models: ASR+LLM is a widely adopted approach for tackling video-to-text tasks. Compared to Whisper-ChatGPT (OpenAI) and a leading commercial ASR+LLM product, Pegasus-1 outperforms them by 79% on MSR-VTT and 188% on the Video Descriptions Dataset. On VidFactScore-F1, it shows 25% absolute gains on the MSR-VTT Dataset and 33% on the Video Descriptions Dataset.
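The numbers above mix two kinds of comparison: relative improvement (a percentage of the baseline score) and absolute gain (a difference in percentage points). A minimal sketch of the difference follows. The baseline and new scores are hypothetical values picked to produce round results; they are not figures from Twelve Labs' report.

```python
def relative_improvement(baseline: float, new: float) -> float:
    """Percent change relative to the baseline score."""
    return (new - baseline) / baseline * 100.0


def absolute_gain(baseline: float, new: float) -> float:
    """Difference in percentage points, for scores expressed on a 0-1 scale."""
    return (new - baseline) * 100.0


if __name__ == "__main__":
    # Hypothetical quality scores: going from 2.00 to 3.22 is a 61% relative improvement.
    print(round(relative_improvement(2.00, 3.22), 1))

    # Hypothetical F1 scores: going from 0.40 to 0.60 is a 20-point absolute gain,
    # which is also a 50% relative improvement over the same baseline.
    print(round(absolute_gain(0.40, 0.60), 1))
    print(round(relative_improvement(0.40, 0.60), 1))
```

The same pair of scores can look very different depending on which measure is quoted, so it is worth checking whether a headline figure is relative or absolute before comparing results.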

API access to Pegasus-1: Here is the link for the waitlist for Pegasus-powered Video-to-Text APIs.
